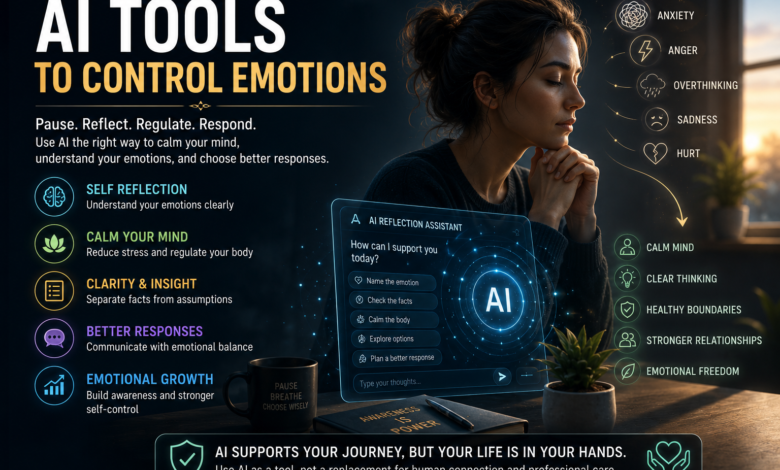

AI Tools to Control Emotions: Safe Ways to Pause Before Reacting

How AI Can Help You Calm Your Mind, Reflect Clearly, and Respond Better

Many people search for AI tools to control emotions because they want a safe pause before reacting. They may also use an AI chatbot for emotional support or AI self reflection prompts when anxiety, anger, overthinking, sadness, or relationship stress feels too heavy to organize alone.

This blog is different because it does not treat AI as a magic therapist, a replacement for human care, or another quick emotional escape.

Instead, it explains how AI emotional regulation tools, AI mental health tools, an AI chatbot for emotional support, AI self reflection prompts, and simple emotional regulation tools can help you slow down, name what you feel, separate facts from fear, calm your nervous system, and choose a mature response.

In the USA, UK, Canada, and Australia, more readers are also exploring AI mental health tools and an AI chatbot for emotional support to understand anxiety, anger, overthinking, sadness, and relationship stress.

But this blog takes a careful BBH approach: AI should help you reflect, calm your nervous system, and choose better responses — not replace therapy, human care, or your own emotional responsibility.

Most people do not lose emotional control because they are weak. They lose control because the nervous system reacts faster than the conscious mind can understand.

One message, one tone, one memory, one rejection, one workplace pressure, or one relationship trigger can quickly move a person from calm thinking into emotional defense. In that moment, the real problem is not the emotion itself. The real problem is the speed of reaction.

This is where AI tools to control emotions can become useful when they are used correctly. They should not be treated as a therapist, a final authority, or a replacement for real human support. But they can help a person pause, name the emotion, organize thoughts, separate facts from assumptions, and choose a more mature response.

For readers in the USA, UK, Canada, and Australia, where digital mental health support is becoming more common, the important question is not only “Can AI help?” The better question is, “How can I use AI safely without becoming dependent on it?”

An AI chatbot for emotional support can be useful when it helps the reader pause, name emotions, and reflect before reacting.

Why People Search for AI Tools to Control Emotions

People often search for AI tools to control emotions when they feel emotionally overloaded but do not know what to do in the moment.

They may be angry after an argument, anxious before a decision, overwhelmed by work pressure, hurt by someone’s silence, or trapped in overthinking at night. In these moments, they are not always looking for deep therapy. Sometimes they are looking for a safe pause.

That pause matters. Many emotional mistakes happen in the first few minutes after a trigger. A person sends a harsh message, overexplains, shuts down, argues, cries alone, scrolls endlessly, or makes a decision from fear.

Later, when the nervous system settles, they may regret the reaction. This is why emotional control is not about becoming emotionless. It is about creating enough space between feeling and action.

For deeper background, BBH readers can also study healthy detachment and how it helps create distance from emotional pressure without becoming cold.

The Real Problem Is Not Emotion — It Is Fast Reaction

Emotion itself is not the enemy.

- Anger can show that a boundary was crossed.

- Anxiety can show that the mind is trying to predict danger.

- Sadness can show that something mattered.

- Hurt can reveal attachment, expectation, or unmet emotional need.

The problem begins when the emotion becomes so fast that the person starts believing every thought that appears inside it.

A triggered mind does not always ask, “What is true?” It often asks, “How do I protect myself right now?”

That protective mode may create assumptions, blame, panic, defensiveness, or withdrawal. In that state, even a small situation can feel urgent.

This is why tools that support reflection can help. AI emotional regulation tools can be useful when they help the reader slow down and ask better questions:

- What am I feeling?

- What actually happened?

- What am I assuming?

- What response will protect my dignity?

For a related BBH guide, read how detachment helps control emotions.

A Simple BBH Insight

Emotional control does not mean suppressing pain. It means learning how to stay conscious while pain is active.

- The goal is not to remove emotions instantly.

- The goal is to stop giving every emotion full permission to control speech, decisions, relationships, and identity.

Good AI self reflection prompts do not tell you to escape responsibility; they help you understand what you feel and what response fits your values.

“Clarity returned for me when I stopped asking AI to decide for me and started using it to slow my reaction.”

What AI Can and Cannot Do for Emotional Control

AI can be helpful, but only when its role is understood clearly. AI mental health tools can help organize thoughts, suggest calming exercises, support journaling, create reflection prompts, and help users look at a situation from different angles. For someone who is overwhelmed, this can feel grounding because the mind receives structure instead of more emotional noise.

But AI cannot truly know the full story of a person’s life. It cannot diagnose someone safely, replace a licensed therapist, provide emergency care, or understand the emotional complexity of trauma, abuse, crisis, or severe mental health symptoms. It may sound supportive, but supportive language is not the same as professional care.

This is the safety line readers must understand. AI can help with emotional reflection, but it should not become the only place a person brings their pain. Real healing still needs self-awareness, healthy relationships, professional support when needed, and honest action in real life.

AI mental health tools can support emotional reflection when they are used for journaling, grounding, and self-awareness rather than diagnosis or crisis care.

Readers who struggle with stress and emotional overwhelm may also find how detachment reduces anxiety and stress helpful.

AI Can Help You Name, Organize, and Reflect on Emotions

One of the most useful roles of AI is emotional naming. Many people say, “I feel bad,” but they do not know whether the feeling is fear, shame, rejection, anger, guilt, loneliness, disappointment, or helplessness.

- When the emotion is not named, the mind creates confusion.

- When it is named, the person gains a small amount of control.

This is where AI self reflection prompts can help. A person can ask AI to help them identify emotions, separate mixed feelings, or reflect on the meaning behind a reaction. This does not mean AI knows the absolute truth. It means AI can act like a structured mirror.

For example, instead of typing, “Tell me what to do,” a healthier prompt is: “Help me understand what I may be feeling and what assumptions I may be making.”

This keeps the user in responsibility. AI supports reflection, but the person remains the decision-maker.

When used carefully, an AI chatbot for emotional support can guide emotional awareness without replacing real human care.

This fits the BBH principle of conscious living, where awareness comes before action.

AI Cannot Replace Therapy, Crisis Care, or Human Support

The biggest mistake is using an AI chatbot for emotional support as if it is a therapist, emergency contact, or final emotional authority. AI may provide calming words, but it does not have human presence, clinical responsibility, emergency judgment, or full understanding of personal history.

If someone is experiencing thoughts of self-harm, danger, abuse, severe depression, panic attacks, trauma flashbacks, addiction crisis, or loss of control, AI should not be used alone. In those cases, the next step should be real human help, local emergency services, a crisis helpline, or a qualified mental health professional.

This boundary builds trust rather than limiting what AI can offer. For readers in the USA, UK, Canada, and Australia, the message is clear: AI can support emotional organization, but it should never delay urgent care or replace professional guidance.

The safest AI self reflection prompts ask you to separate facts, assumptions, fears, and possible mature responses.

The safest use of AI is not dependency. The safest use is guided reflection that leads the person back to real-world support, better choices, and emotional responsibility.

The Safe Role of AI in Emotional Regulation

The best way to understand AI is this: AI can be a pause tool. It can help the reader slow the emotional storm long enough to think. It can suggest grounding exercises, help rewrite an angry message, organize a confusing conflict, or create a journaling question. These are practical emotional regulation tools, but they work only when the user stays aware.

AI should not be used to avoid accountability. It should not become a place where someone only seeks validation for anger, blame, revenge, or avoidance. A mature user asks AI for clarity, not permission to react. This difference is important.

For example, a reactive prompt says, “Prove that I am right.” A mature prompt says, “Help me see this situation more clearly and respond without disrespect.” That second prompt protects emotional maturity.

An AI chatbot for emotional support should help you slow down before replying, not push you into quick emotional decisions.

This is the unique BBH angle: emotional control is not only about calming down. It is about returning to conscious choice.

AI Works Best as a Pause Tool, Not a Final Authority

When someone is triggered, the mind often wants quick certainty. It wants to know who is wrong, what to say, whether to leave, whether to confront, or whether to protect itself. AI may give confident-sounding answers, but emotional life is rarely simple enough for instant conclusions.

That is why AI tools to control emotions should be used to create space, not to surrender judgment. The user can ask AI to slow the situation down, list possible interpretations, identify emotional triggers, and suggest calm responses. But the final choice must remain human.

This is especially important in relationships, parenting, workplace conflict, and family tension. AI can help draft words, but it cannot feel the consequences of those words. The user must still bring wisdom, values, timing, and responsibility.

The goal is not to let AI control emotions for you. The goal is to use AI to help you return to yourself before emotion controls you.

The Better Question to Ask AI

Instead of asking, “What should I do?” ask:

“Help me slow down, understand what I am feeling, separate facts from assumptions, and choose a response that protects my peace and dignity.”

This one shift changes the role of AI. It stops being an emotional authority and becomes a reflection support. That is the safest foundation for the rest of this blog.

Use AI self reflection prompts when you feel confused, triggered, or emotionally overwhelmed and need a structured pause.

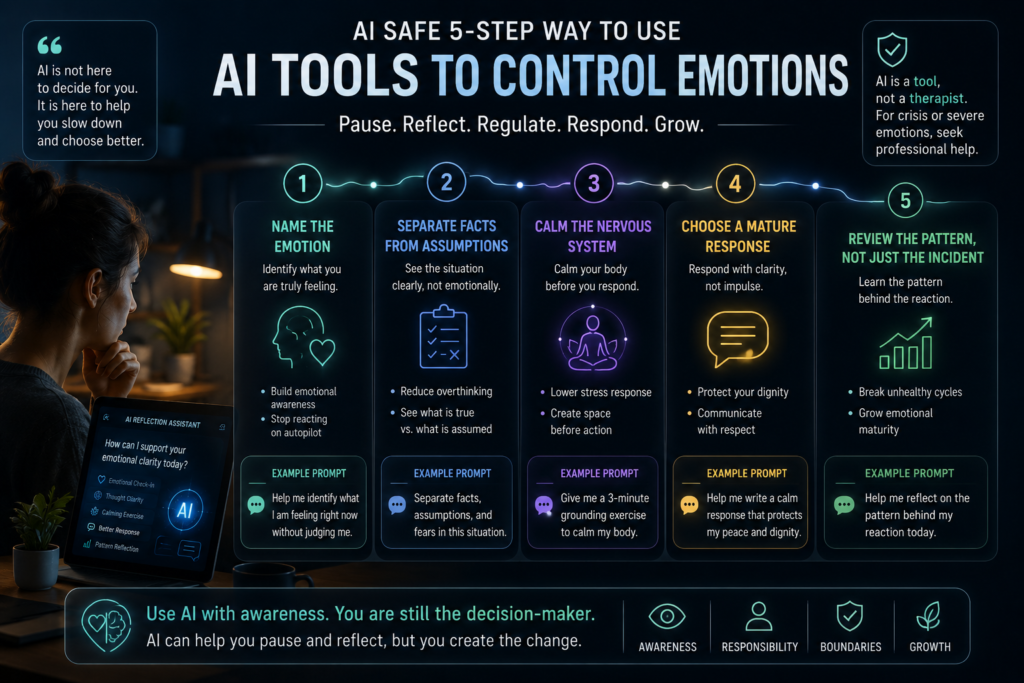

A Safe 5-Step Way to Use AI Tools to Control Emotions

AI becomes useful for emotional control only when it is used with the right intention. If the intention is to escape discomfort, prove yourself right, avoid a real conversation, or get quick validation for a reaction, AI can easily become another emotional dependency. But when the intention is to pause, reflect, calm the nervous system, and choose a better response, AI tools to control emotions can support real emotional maturity.

This is the practical difference most articles miss. AI should not become the place where you hand over your emotional authority. It should become a structured mirror that helps you return to awareness before you speak, text, argue, withdraw, or decide.

Step 1 — Name the Emotion Before Explaining the Story

The first mistake people make during emotional overwhelm is trying to explain the story before naming the emotion.

They say, “They disrespected me,” “Nobody cares,” “This always happens,” or “I cannot handle this anymore.”

These thoughts may feel true in the moment, but they are usually interpretations formed inside emotional activation.

Before asking AI what to do, the healthier step is to ask what you may be feeling. Is it anger, fear, shame, rejection, disappointment, guilt, loneliness, helplessness, or emotional exhaustion?

This matters because different emotions need different responses.

- Anger may need a boundary.

- Fear may need grounding.

- Shame may need self-compassion.

- Sadness may need support.

- Confusion may need time.

This is where AI self reflection prompts can become useful. They help the user slow down and name the emotional state without rushing toward a decision.

For readers who want a deeper safety angle on emotional validation, BBH also has a related guide on how to feel heard safely with AI.

For anger, anxiety, and overthinking, AI self reflection prompts can help turn emotional noise into clear next steps.

Example Prompt for Naming Emotions

Use this prompt when emotions feel mixed or confusing:

“Help me identify what I may be feeling right now. Do not tell me what to do yet. Ask me gentle questions and help me separate the emotion from the story.”

This prompt keeps AI in the role of reflection. It does not ask AI to decide your life. It asks AI to help you become more aware.

Step 2 — Separate Facts From Assumptions

After naming the emotion, the next step is separating facts from assumptions. This is important because emotional pain often becomes heavier when the mind treats assumptions as proof.

For example, “They did not reply” is a fact. “They do not care about me” is an assumption. “My boss gave short feedback” is a fact. “I am going to lose everything” is a fear-based prediction.

When the nervous system is activated, the mind tries to fill gaps quickly. It does this to protect you, but it can also create unnecessary suffering. This is why AI emotional regulation tools should be used to slow interpretation, not strengthen panic.

A good AI prompt can help you divide the situation into three parts: what happened, what you are assuming, and what else could be possible. This does not mean you ignore your feelings. It means you stop letting fear write the whole story.

Example Prompt for Reality Checking

Use this prompt before reacting to a message, conflict, or stressful thought:

“Separate this situation into facts, assumptions, fears, and possible balanced interpretations. Help me see where my emotion may be making the situation feel more certain than it really is.”

This kind of prompt can reduce impulsive replies, overthinking, and emotional spiraling.

Step 3 — Calm the Nervous System Before Responding

Emotional control is not only a thinking skill. It is also a body skill. When your nervous system is in threat mode, even logical advice may not work because the body still feels unsafe. The heart may race, breathing may become shallow, the chest may feel tight, the jaw may lock, and the mind may feel urgent.

This is why emotional regulation tools must include body-based calming. AI can support this by giving short grounding exercises, breathing prompts, sensory check-ins, and step-by-step calming routines. But the real change happens when the person practices the exercise, not just reads it.

A mature use of AI is not asking, “How do I win this argument?” A mature use is asking, “Help me calm my body before I respond.” That one shift can protect relationships, work decisions, family conversations, and personal peace.

For a connected BBH guide, readers can also explore AI emotional support tools for a calmer mind.

Example Prompt for Calming

Use this before replying, confronting, deciding, or sending a message:

“Give me a 3-minute grounding exercise to calm my nervous system before I respond. Keep it simple, practical, and focused on slowing my body first.”

This helps the user return from emotional urgency to conscious choice.

Step 4 — Choose a Mature Response

Once the emotion is named, the assumptions are separated, and the body is calmer, the next step is choosing a mature response. A mature response does not mean a weak response. It means a response that protects your dignity, communicates clearly, and does not create unnecessary damage.

Many people think emotional control means silence. But it can take several forms:

- Sometimes it means speaking clearly without attacking.

- Sometimes it means delaying the conversation.

- Sometimes it means setting a boundary.

- Sometimes it means apologizing.

- Sometimes it means not replying until the nervous system is stable.

This is where AI tools to control emotions can help with language.

- It can help rewrite a harsh message into a calm one.

- It can help reduce blaming words.

- It can help make a boundary clearer.

- It can help you say less, but say it with more maturity.

However, AI should not be used to manipulate people, sound perfect, or hide true responsibility.

- The goal is not polished emotional avoidance.

- The goal is honest communication with better self-control.

An AI chatbot for emotional support is safest when it encourages real-life action, professional help when needed, and healthy boundaries.

Example Prompt for Better Response

Use this when you want to respond without losing emotional balance:

“Help me write a calm, respectful response that expresses my point clearly without attacking, overexplaining, or abandoning my boundary.”

This prompt keeps your dignity at the center. It also prevents AI from becoming a tool for emotional performance.

Step 5 — Review the Pattern, Not Just the Incident

The deepest use of AI is not only solving one emotional moment. It is noticing repeated patterns. If the same kind of trigger keeps happening, the issue may not be only the current situation. It may be an emotional pattern connected to fear, rejection sensitivity, control, abandonment, shame, perfectionism, or old stress.

This is where AI self reflection prompts become powerful.

They can help you ask, “What pattern is repeating here?” instead of only asking, “What should I say now?” That question creates growth. It helps the reader move from emotional reaction to emotional learning.

For example, someone may realize they become defensive whenever they feel corrected. Another person may realize silence triggers abandonment fear. Someone else may notice they say yes too quickly because conflict feels unsafe.

This does not mean AI diagnoses you. It simply helps you reflect. The user must still stay responsible, seek human support when needed, and avoid turning AI into a substitute for therapy.

Example Prompt for Pattern Awareness

Use this after the emotional moment has passed:

“Help me reflect on the emotional pattern behind my reaction. What trigger, fear, belief, or unmet need might be repeating here? Keep the answer supportive but honest.”

This type of prompt supports emotional maturity because it turns the incident into learning.

An AI chatbot for emotional support is most helpful when it guides you to pause, name emotions, and return to real-life action instead of depending on the tool.

Do not use an AI chatbot for emotional support as your only support system during crisis, trauma, abuse, or severe emotional distress.
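For readers who like to keep their prompts in a script or notes app, the five steps above can be sketched as an ordered checklist. This is only an illustration: `FIVE_STEPS` and `next_step` are hypothetical names, not part of any AI product, and the prompts are the example prompts from this guide. The code does not talk to an AI service; it only hands you the next prompt so that the order (emotion first, response later) is not skipped.

```python
from typing import Optional, Tuple

# Hypothetical checklist: the five steps in order, each paired with
# the example prompt given for that step in this guide.
FIVE_STEPS = (
    ("Name the emotion",
     "Help me identify what I may be feeling right now. Do not tell me what "
     "to do yet. Ask me gentle questions and help me separate the emotion "
     "from the story."),
    ("Separate facts from assumptions",
     "Separate this situation into facts, assumptions, fears, and possible "
     "balanced interpretations. Help me see where my emotion may be making "
     "the situation feel more certain than it really is."),
    ("Calm the nervous system",
     "Give me a 3-minute grounding exercise to calm my nervous system before "
     "I respond. Keep it simple, practical, and focused on slowing my body "
     "first."),
    ("Choose a mature response",
     "Help me write a calm, respectful response that expresses my point "
     "clearly without attacking, overexplaining, or abandoning my boundary."),
    ("Review the pattern",
     "Help me reflect on the emotional pattern behind my reaction. What "
     "trigger, fear, belief, or unmet need might be repeating here? Keep the "
     "answer supportive but honest."),
)


def next_step(completed: int) -> Optional[Tuple[str, str]]:
    """Return (step name, prompt) for the step after `completed` finished
    steps, or None once all five steps are done."""
    if completed < 0:
        raise ValueError("completed cannot be negative")
    if completed >= len(FIVE_STEPS):
        return None
    return FIVE_STEPS[completed]


if __name__ == "__main__":
    # Print the checklist in order, one prompt per step.
    for number, (name, prompt) in enumerate(FIVE_STEPS, start=1):
        print(f"Step {number}: {name}\n  {prompt}\n")
```

Keeping the steps in a fixed order is a design choice that mirrors the guide: the prompt for writing a response only appears after the naming, reality-checking, and calming prompts.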

Best AI Prompts for Emotional Control

A strong way to use AI is to keep a small set of prompts ready before emotional overwhelm happens. When the nervous system is activated, it is hard to think clearly.

Having prepared prompts makes it easier to pause instead of react.

| Emotional Situation | AI Prompt | Purpose |
|---|---|---|
| Anger after a message | “Help me calm down before I reply. What should I not say while angry?” | Prevents impulsive reaction |
| Anxiety before a decision | “Separate realistic concerns from fear-based predictions.” | Reduces overthinking |
| Relationship hurt | “Help me express hurt without blaming or begging.” | Supports mature communication |
| Shame after a mistake | “Help me learn from this without attacking myself.” | Reduces self-criticism |
| Work stress | “Help me list what is urgent, what can wait, and what I can control.” | Creates mental structure |
| Family conflict | “Help me set a respectful boundary without escalating the situation.” | Protects dignity |
| Night overthinking | “Give me a short reflection and grounding routine before sleep.” | Supports calming |

These prompts work best when the user understands the boundary. AI can help organize emotion, but it cannot replace deep healing, human connection, therapy, or real-world action.
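One way to keep the prompts from the table above ready is a small lookup. This is a minimal sketch under stated assumptions: `PREPARED_PROMPTS`, `FALLBACK_PROMPT`, and `pick_prompt` are hypothetical names chosen for illustration, and the fallback is the general pause prompt from earlier in this guide. It does not call any AI service; it only returns the text you would paste into the tool you already use.

```python
# General pause prompt from this guide, used when no situation matches.
FALLBACK_PROMPT = (
    "Help me slow down, understand what I am feeling, separate facts from "
    "assumptions, and choose a response that protects my peace and dignity."
)

# Hypothetical prompt library: situations and prompts copied from the table.
PREPARED_PROMPTS = {
    "anger after a message":
        "Help me calm down before I reply. What should I not say while angry?",
    "anxiety before a decision":
        "Separate realistic concerns from fear-based predictions.",
    "relationship hurt":
        "Help me express hurt without blaming or begging.",
    "shame after a mistake":
        "Help me learn from this without attacking myself.",
    "work stress":
        "Help me list what is urgent, what can wait, and what I can control.",
    "family conflict":
        "Help me set a respectful boundary without escalating the situation.",
    "night overthinking":
        "Give me a short reflection and grounding routine before sleep.",
}


def pick_prompt(situation: str) -> str:
    """Return the prepared prompt for a situation, ignoring case and extra
    spaces, or the general pause prompt if the situation is not listed."""
    key = " ".join(situation.lower().split())
    return PREPARED_PROMPTS.get(key, FALLBACK_PROMPT)


if __name__ == "__main__":
    print(pick_prompt("Work stress"))
```

Falling back to the general pause prompt, rather than raising an error, reflects the point of preparing prompts in advance: in an activated moment there should always be something safe to reach for.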

For readers comparing digital support and professional care, BBH’s guide on AI therapy tools and their risks is a useful next read.

Prompts for Anger, Anxiety, Overthinking, and Relationship Stress

Different emotions need different AI prompts. Anger needs slowing and boundary clarity.

Anxiety needs fact-checking and grounding. Overthinking needs structure. Relationship stress needs calm communication and emotional honesty.

For anger, ask AI: “Help me express this without disrespecting myself or the other person.”

For anxiety, ask: “What are three facts, three fears, and three small next steps?”

For overthinking, ask: “Help me stop looping and choose one practical action.”

For relationship stress, ask: “Help me say what I feel without blaming, chasing, or shutting down.”

These prompts are not magic solutions. They are emotional bridges. They help the user move from reaction to reflection, from urgency to awareness, and from emotional noise to a clearer next step.

This is the real value of AI tools to control emotions. They do not remove human pain. They help create a pause where wisdom can return.

Safety Note Before Using AI for Emotional Control

AI is helpful only when it supports self-awareness. It becomes risky when the user starts using it as the only emotional support system, the only decision-maker, or the only place where they feel heard.

This is especially important for readers in the USA, UK, Canada, and Australia, where AI mental health tools and emotional support chatbots are becoming more common but still require careful use.

Use AI for reflection, journaling, grounding, communication support, and emotional organization. Do not use it alone for crisis, abuse, self-harm thoughts, severe depression, panic episodes, addiction danger, or trauma flashbacks. In those moments, real human support and professional care matter.

The safest rule is simple: AI can help you pause, but your life should not depend only on AI. The best emotional control still comes from awareness, nervous system regulation, honest relationships, wise boundaries, and support when support is needed.

If AI self reflection prompts make you more aware, calm, and responsible, they are being used in a healthy way.

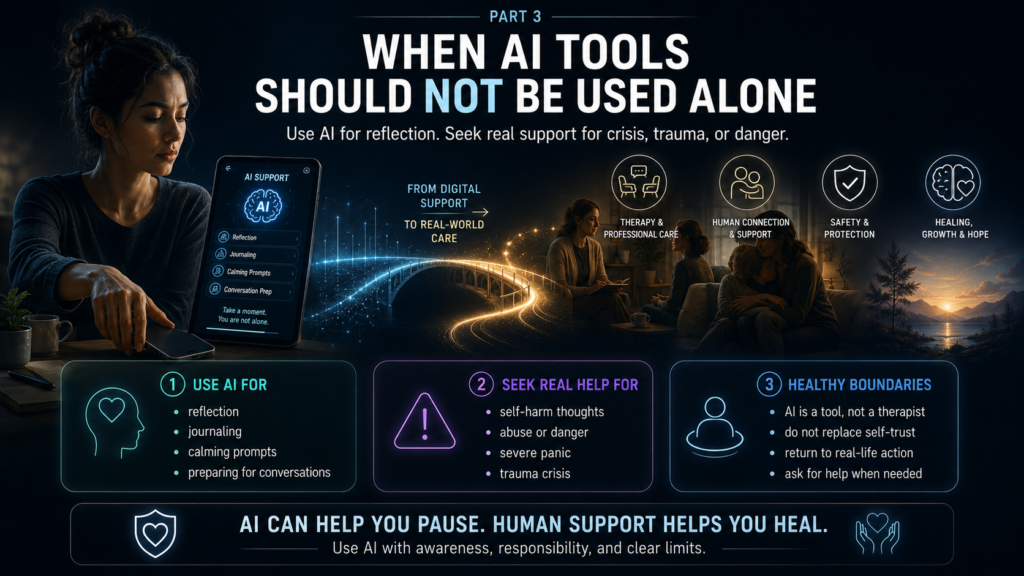

When AI Tools Should Not Be Used Alone

AI tools to control emotions can help with reflection, calming, journaling, and better communication, but they should not be used alone when the situation becomes serious, unsafe, or clinically complex.

This is one of the most important parts of the whole blog because responsible AI use is not only about what AI can do. It is also about knowing when AI is not enough.

If someone is dealing with self-harm thoughts, suicidal feelings, abuse, violence, severe panic, trauma flashbacks, addiction crisis, dangerous impulses, or a complete loss of control, AI should not be treated as the main support. In those moments, the person needs real human help, local emergency services, a crisis helpline, a doctor, or a qualified mental health professional.

This boundary matters especially for readers in the USA, UK, Canada, and Australia, where digital support tools are becoming more common. The safest message is simple: use AI for reflection, but do not use it to delay urgent care.

AI Is Not a Licensed Therapist

AI may sound supportive, but supportive language is not the same as licensed care. AI mental health tools can offer prompts, summaries, calming exercises, and emotional reflection, but they do not carry the same clinical responsibility as a trained professional.

They do not fully know your medical history, trauma background, relationship context, physical symptoms, medication situation, or safety risk.

This does not mean AI has no value. It means AI must remain in its correct place.

- It can help you organize your thoughts before therapy.

- It can help you prepare questions for a counselor.

- It can help you notice emotional patterns.

- It can help you calm down before a difficult conversation.

But AI should not become the only place where you bring your pain. Healing becomes stronger when digital support leads you back toward real life: better choices, healthier boundaries, professional care when needed, and honest human connection.

Clear Safety Rule

Use AI for reflection, not emergency care.

If your emotions feel dangerous, uncontrollable, or connected to self-harm, harm to others, abuse, or crisis, stop using AI as the main support and contact real help immediately.

A tool can support awareness, but it cannot replace human protection.

The best AI self reflection prompts build self-trust because they return the final decision back to you.

How to Use AI Without Becoming Emotionally Dependent on It

One hidden risk of AI tools to control emotions is emotional dependency. This can happen quietly. A person may start asking AI before every decision, every message, every conflict, every feeling, and every uncomfortable conversation. At first, this feels helpful. Over time, it can weaken self-trust.

The goal is not to make AI your emotional parent, therapist, judge, or best friend. The goal is to use AI as a temporary mirror that helps you return to yourself. A healthy user finishes an AI conversation with more clarity, not more dependency.

This is why the BBH approach is different from many generic AI articles.

The focus is not only “Which tool should you use?”

The deeper question is, “Is this tool making you more aware, more responsible, and more emotionally steady?”

If AI helps you pause and then take mature action, it is useful.

If AI helps you avoid life, avoid people, avoid therapy, avoid accountability, or avoid your own inner voice, it is becoming unhealthy.

Do Not Let AI Replace Self-Trust

Self-trust grows when you learn to listen to your emotions without being controlled by them. AI can support that process, but it cannot build self-trust for you. You still have to notice what you feel, choose what matters, accept consequences, and practice emotional responsibility.

A healthy prompt sounds like this: “Help me understand my emotion and possible options.”

An unhealthy prompt sounds like this: “Tell me exactly what to do because I cannot trust myself.”

That small difference matters. The first prompt builds awareness. The second prompt gives away authority. When a person gives away authority too often, they may feel calmer in the short term but weaker in the long term.

The purpose of emotional regulation is not to need a tool forever. The purpose is to slowly become more capable of pausing, thinking, feeling, and responding with maturity.

For deeper emotional growth, readers can begin from BBH’s support pathway: Start Here – Your Journey to Mental Clarity & Emotional Healing.

Do Not Use AI to Avoid Hard Conversations

Another risk is using an AI chatbot for emotional support to avoid real conversations. Someone may talk to AI for hours about a relationship conflict but never communicate honestly with the person involved.

- They may ask AI to validate their hurt but never set a boundary.

- They may rewrite messages endlessly but never address the deeper pattern.

AI can help prepare for a conversation, but it cannot replace the conversation.

- It can help you clarify what you feel, but it cannot repair trust for you.

- It can help you write a respectful boundary, but it cannot enforce that boundary in real life.

This is important in romantic relationships, family conflict, parenting, workplace stress, and friendships. Emotional maturity means learning to speak honestly without attacking, collapsing, chasing, or disappearing.

AI can help you choose words. You still have to choose courage.

A Healthy AI Emotional Control Routine

A healthy routine keeps AI limited, practical, and connected to real-life action. This prevents overuse and keeps the user in leadership. The best use of AI tools to control emotions is short, intentional, and structured.

Instead of opening AI every time you feel discomfort, create three fixed uses: a morning check-in, a trigger pause, and a night reflection. This keeps AI from becoming a constant emotional crutch while still allowing it to support awareness.

This also fits readers in high-pressure environments across the USA, UK, Canada, and Australia, where work stress, digital overload, loneliness, and anxiety often combine. AI can support a calmer emotional routine, but only if the user controls the tool instead of letting the tool control the emotional process.

For readers who want a related support pathway, BBH’s AI Therapy & Self-Help Tools page can guide them toward safer AI use.

Morning Check-In Prompt

Use AI for a short emotional check-in before the day becomes busy:

“Help me check in with my emotional state today. Ask me three simple questions about my mood, stress level, and one thing I can do to stay regulated.”

This prompt creates awareness before pressure builds. It helps the reader begin the day with emotional responsibility instead of waiting until stress becomes too strong.

Trigger Pause Prompt

Use this when a message, memory, argument, or situation activates you:

“I feel triggered. Help me pause before I react. Ask me what happened, what I am feeling, what I am assuming, and what response would protect my dignity.”

This is one of the most useful emotional regulation tools because it creates space between emotional activation and outward reaction.

Night Reflection Prompt

Use AI at night to close the day without overthinking:

“Help me reflect on today without self-criticism. What did I handle well, what emotional pattern did I notice, and what is one small improvement for tomorrow?”

This helps the user turn emotional moments into learning. It also prevents the mind from carrying unresolved emotional noise into sleep.

How AI Can Support Emotional Maturity

Emotional maturity is not the absence of strong feelings. It is the ability to feel something deeply without allowing that feeling to destroy your words, choices, relationships, or self-respect. AI can support this maturity when it helps the reader slow down.

Good AI use asks better questions. It does not simply say, “You are right.”

It helps the person ask:

- “What is true?”

- “What is fear?”

- “What is my responsibility?”

- “What boundary is needed?”

- “What response matches my values?”

This is where AI self reflection prompts become more useful than quick advice. A prompt that builds maturity does not push the person into instant action. It invites them into awareness.

For example, after a conflict, a helpful prompt is:

“Help me understand what part of my reaction was protective, what part was excessive, and what I can do differently next time.”

That is emotional learning. That is where AI becomes a mirror, not a master.

What Makes This BBH Approach Different

Most articles about AI and emotions focus on apps, features, chatbots, or benefits.

This blog takes a more mature position.

It does not ask readers to trust AI blindly. Instead, it teaches them how to use AI with self-awareness, emotional responsibility, nervous system regulation, and clear safety limits.

That is the unique BBH value. The reader is not only learning about AI emotional regulation tools. They are learning how to build a healthier relationship with their own emotions.

AI may help you name the feeling. But you still need courage to face the truth.

AI may help you write a calm message. But you still need humility to send it wisely.

AI may help you notice a pattern. But you still need discipline to change that pattern.

This is where technology and inner work meet. The tool gives structure. The human brings awareness.

For related emotional growth, readers can also study how detachment helps control emotions.

Final BBH Takeaway

AI tools to control emotions can be helpful when they create a pause between trigger and reaction. They can help you name emotions, separate facts from assumptions, calm your nervous system, reflect on patterns, and choose a more mature response. But AI should never replace therapy, crisis support, human connection, or your own responsibility.

The healthiest use of AI is not emotional dependency. It is emotional leadership.

- You use the tool, then return to your life with more clarity.

- You ask better questions, then make better choices.

- You calm your nervous system, then speak with more dignity.

For readers in the USA, UK, Canada, and Australia, this matters because digital support is growing quickly, but emotional safety still needs wisdom. AI can help you slow down. It can help you reflect. It can help you organize your mind.

But the final goal is not to become attached to AI.

The final goal is to become more conscious, more grounded, and more capable of responding from your values instead of your wounds.

People Also Ask

1. Can AI tools help control emotions?

Yes, AI tools to control emotions can help you pause, name feelings, separate facts from assumptions, and choose a calmer response. They work best as reflection tools, not as replacements for therapy or human support.

2. Are AI emotional regulation tools safe?

AI emotional regulation tools can be safe for journaling, grounding, and self-reflection when used carefully. For crisis, self-harm thoughts, abuse, or severe symptoms, real human and professional support is necessary.

3. Can an AI chatbot for emotional support replace therapy?

No, an AI chatbot for emotional support should not replace a licensed therapist. The APA warns that AI chatbots and wellness apps need stronger safety protections, especially when people turn to them for unmet mental health needs. (American Psychological Association)

4. What are the best AI prompts for emotional control?

The best prompts ask AI to help you slow down, name the emotion, separate facts from fears, calm the body, and prepare a respectful response. Avoid prompts that ask AI to decide your life for you.

5. When should I stop using AI and seek real help?

Stop relying on AI alone if emotions feel dangerous, uncontrollable, or connected to self-harm, abuse, panic, trauma flashbacks, addiction crisis, or severe depression. NIMH notes that mental health apps have potential, but there is still uncertainty and limited regulation. (National Institute of Mental Health)

FAQ

1. What are AI tools to control emotions?

They are digital tools, chatbots, or prompts that help users reflect, calm down, journal, and organize emotions. They should support awareness, not replace personal judgment or professional care.

2. How can AI mental health tools help with anxiety?

AI mental health tools can help users organize anxious thoughts, identify fear-based assumptions, and practice grounding prompts. They are most useful for mild stress support, not emergency mental health care.

3. Are AI self reflection prompts useful?

Yes, AI self reflection prompts can help users understand emotional patterns, triggers, and repeated reactions. They are useful when they lead to real-life awareness, boundaries, and healthier action.

4. Can AI give wrong emotional advice?

Yes, AI can give incomplete, inaccurate, or overconfident advice, especially in complex mental health situations. WHO has warned that many generative AI tools used for emotional support were not designed or tested for mental health care. (World Health Organization)

5. Should I use AI before talking to a therapist?

You can use AI to organize your thoughts, list questions, or prepare notes before therapy. But AI should not delay therapy when you need professional help.

External References

- American Psychological Association: Health Advisory on AI Chatbots and Wellness Apps

URL: https://www.apa.org/topics/artificial-intelligence-machine-learning/health-advisory-chatbots-wellness-apps

- National Institute of Mental Health: Technology and the Future of Mental Health Treatment

URL: https://www.nimh.nih.gov/health/topics/technology-and-the-future-of-mental-health-treatment

- World Health Organization: Responsible AI for Mental Health and Well-Being

URL: https://www.who.int/news/item/20-03-2026-towards-responsible-ai-for-mental-health-and-well-being–experts-chart-a-way-forward

- FDA: Software as a Medical Device

URL: https://www.fda.gov/medical-devices/digital-health-center-excellence/software-medical-device-samd