AI Therapy Tools: Benefits, Risks, Online Counseling Support

How AI Therapy Tools Support Mental Health Without Replacing Human Therapists

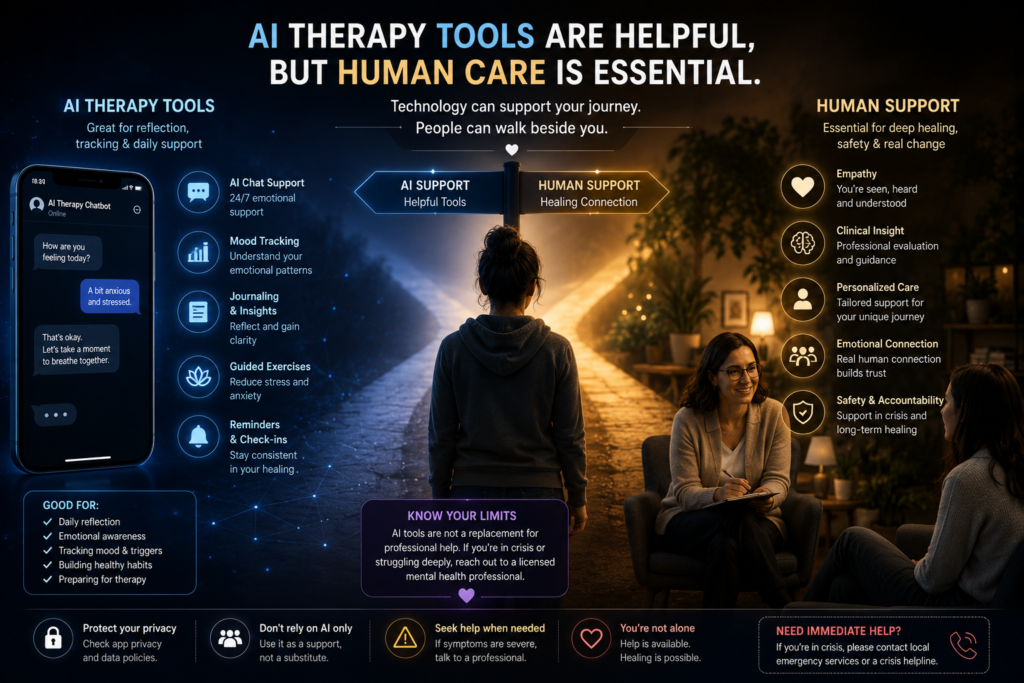

AI therapy tools are becoming popular because many people need emotional support before they feel ready for therapy, but most articles only explain apps, features, or future technology.

This blog goes deeper. It shows how AI therapy tools, AI mental health apps, online counseling, hybrid therapy, and an AI therapy chatbot can support emotional awareness without replacing human care.

Readers in the USA, UK, Canada, and Australia will understand the real difference between safe support and risky dependence.

The unique value of this blog is its BBH angle: technology is not treated as a therapist, but as a bridge that helps people name emotions, track patterns, prepare for counseling, and regulate the nervous system between sessions.

This article will help readers make smarter, safer decisions about digital mental health support while remembering one essential truth: healing should remain human-led, ethical, and emotionally grounded.

AI therapy tools are becoming a serious part of modern mental health support, especially in the USA, UK, Canada, and Australia, where many people face long therapy waiting times, high costs, emotional hesitation, and the private fear of speaking openly about what they are going through.

The unique value of this blog is simple: it does not treat AI as a magic therapist, and it does not reject technology out of fear.

Instead, it explains how AI therapy tools can support emotional awareness, prepare someone for online counseling, and become part of a safer hybrid therapy model when used with human judgment.

Readers will understand what these tools can do, what they cannot do, and when professional help is still necessary.

What Are AI Therapy Tools and Why Are They Growing?

AI therapy tools are digital mental health tools that use artificial intelligence to support emotional reflection, journaling, mood tracking, coping prompts, chatbot conversations, and therapy preparation.

Some tools work like AI mental health apps, while others function as an AI therapy chatbot that responds to emotional concerns in a conversational way. These tools are growing because people want support that feels private, fast, affordable, and available outside normal therapy hours.

But the most important point is this: AI therapy tools should be seen as support systems, not human replacements.

They may help someone name their feelings, notice patterns, prepare questions before online counseling, or reflect after a difficult day. They may also help users track anxiety, stress, sleep, mood changes, and emotional triggers. However, they do not carry the clinical responsibility, emotional depth, or ethical judgment of a trained therapist.

For BBH readers, the deeper meaning is that technology can sometimes create the first safe pause between emotional pain and impulsive reaction. When a person feels overwhelmed, confused, or ashamed, even a small moment of reflection can become the beginning of healing.

Read Also: AI mental health tools and emotional pain mapping

Why People Are Turning to AI Mental Health Apps

People are not turning to AI mental health apps only because technology is new. Many are turning to them because they feel emotionally stuck.

- Some people do not know how to describe their pain.

- Some feel embarrassed to speak to a therapist.

- Some are waiting for online counseling but need support today.

- Others live in places where professional help is expensive, delayed, or difficult to access.

In the USA, UK, Canada, and Australia, this matters because mental health support is not always immediate. A person may understand that they need help, but still feel afraid to book a session.

They may ask, “Is my problem serious enough?” or “What if I cannot explain it properly?” AI therapy tools can sometimes help during this early stage by giving users a private space to write, reflect, and understand what they are feeling before they speak to a professional.

The emotional reason behind this search is often not just therapy. It is the need to feel heard without judgment.

Read Also: AI Emotional Support: Feel Heard Safely

For many people, the first need is not advice but emotional validation: the feeling that their pain is seen without judgment. That need sits at the center of the larger discussion around AI therapy tools.

The Real Emotional Need Behind AI Therapy Tools

The real reason many people search for AI therapy tools is not because they want a machine to replace a therapist.

They search because they want a safe first step. They may be anxious, lonely, ashamed, confused, or emotionally overloaded. They may want to understand whether their reaction is normal, whether their sadness matters, or whether their anxiety is becoming too heavy.

This is where AI can help in a limited but meaningful way.

- An AI therapy chatbot may ask reflective questions.

- A therapy app may suggest journaling.

- An emotional support app may help someone track mood patterns.

These small actions can help a person move from emotional chaos into clearer self-awareness.

But this support must stay grounded. AI mental health apps should never be treated as a diagnosis tool, emergency service, or full replacement for online counseling. The healthiest approach is to use them as preparation, reflection, and support between real human care.

“Sometimes healing begins before a person finds the right words. A safe first step can help the heart admit what it has been carrying.”

Read Also: CBT AI and shame recovery

Can AI Therapy Tools Help Before Online Counseling?

Yes, AI therapy tools can help before online counseling when they are used carefully. Many people enter therapy feeling unprepared. They may not know what to say, where to begin, or how to explain their emotional history. AI-supported journaling, mood tracking, and guided reflection can help users organize their thoughts before speaking with a counselor or therapist.

For example, someone may use a digital mental health tool to track when anxiety becomes stronger, what triggers emotional shutdown, or what thoughts appear before panic. This can make online counseling more focused because the person arrives with clearer examples instead of only saying, “I feel bad all the time.”

This is also where hybrid therapy becomes important. Hybrid therapy means using technology and human support together.

AI may help with daily reflection, while a trained therapist helps with deeper interpretation, trauma safety, diagnosis, and treatment planning. That balance is safer than depending only on a chatbot.

Read Also: AI apps for anxiety relief

What AI Therapy Tools Should Never Do

AI therapy tools should never replace emergency care, crisis support, medical diagnosis, trauma therapy, or a licensed mental health professional.

If someone is experiencing thoughts of self-harm, severe depression, panic attacks, abuse, addiction, symptoms of psychosis, or an inability to function in daily life, they need real professional support, not only an AI therapy chatbot.

This safety point is especially important for readers in the USA, UK, Canada, and Australia because mental health systems, privacy laws, and therapy access differ across countries. A tool may be helpful for reflection, but it cannot fully understand a person’s culture, history, risk level, body language, or clinical needs.

The safest BBH message is this: AI can support awareness, but human care must lead healing when pain becomes deep, dangerous, or complex.

Read Also: AI emotional intelligence and mental health

Part 1 Closing Bridge

AI therapy tools are useful when they help people pause, reflect, prepare, and feel less alone. They become risky when people treat them as a full replacement for trained human care.

The best future is not AI instead of therapy. The best future is ethical AI support combined with online counseling, human judgment, emotional education, and safer hybrid therapy.

In Part 2, we will go deeper into the real benefits of AI therapy tools, how hybrid therapy works, and how AI mental health apps may support nervous system regulation without pretending to replace human connection.

Benefits, Hybrid Therapy, and Nervous System Support

Key Benefits of AI Therapy Tools for Emotional Support

AI therapy tools are useful when they help a person understand what is happening inside them before emotions become too heavy to manage.

Many people do not immediately know whether they are anxious, ashamed, lonely, overstimulated, burned out, or emotionally numb. They only know that something feels wrong.

This is where digital mental health tools can create a first layer of emotional clarity. Instead of keeping every feeling trapped in the mind, a person can write, track, reflect, and begin noticing repeated patterns.

For readers in the USA, UK, Canada, and Australia, this matters because mental health support is often delayed by cost, availability, location, or personal hesitation.

AI mental health apps may offer small daily check-ins, mood tracking, guided journaling, breathing prompts, and emotional support between therapy sessions. They are not a final solution, but they can reduce the gap between “I am struggling” and “I am ready to ask for help.”

Emotional Tracking Helps People Notice Patterns Earlier

One strong benefit of AI therapy tools is emotional tracking. A person may believe their anxiety appears randomly, but after tracking mood, sleep, screen time, conflict, work pressure, or loneliness, patterns often become clearer.

For example, anxiety may rise after poor sleep, emotional avoidance, social comparison, or constant news consumption. When those patterns are visible, the person can take better action.

This connects well with the BBH idea of emotional awareness. Healing becomes easier when the mind stops treating every feeling as a mystery.

A digital tool may help someone notice, “I feel worse every Sunday evening,” or “I become overwhelmed after scrolling late at night.”

That kind of insight can make online counseling more useful because the therapist receives clearer examples instead of vague emotional confusion.

Read Also: How AI can support emotional pain mapping

Why Pattern Awareness Matters for Anxiety and Overwhelm

Anxiety often feels stronger when the nervous system cannot identify what is threatening it. Pattern awareness gives the brain a sense of structure.

When someone can see that their anxiety increases after certain thoughts, habits, relationships, or environments, the emotion becomes easier to work with. It does not mean the anxiety disappears immediately, but it becomes less confusing.

This is one reason AI mental health apps can support people before or between therapy sessions. They may help users record triggers, rate emotional intensity, and observe how coping tools affect mood.

This makes the person more prepared for online counseling, especially if they struggle to explain their feelings clearly in the moment.

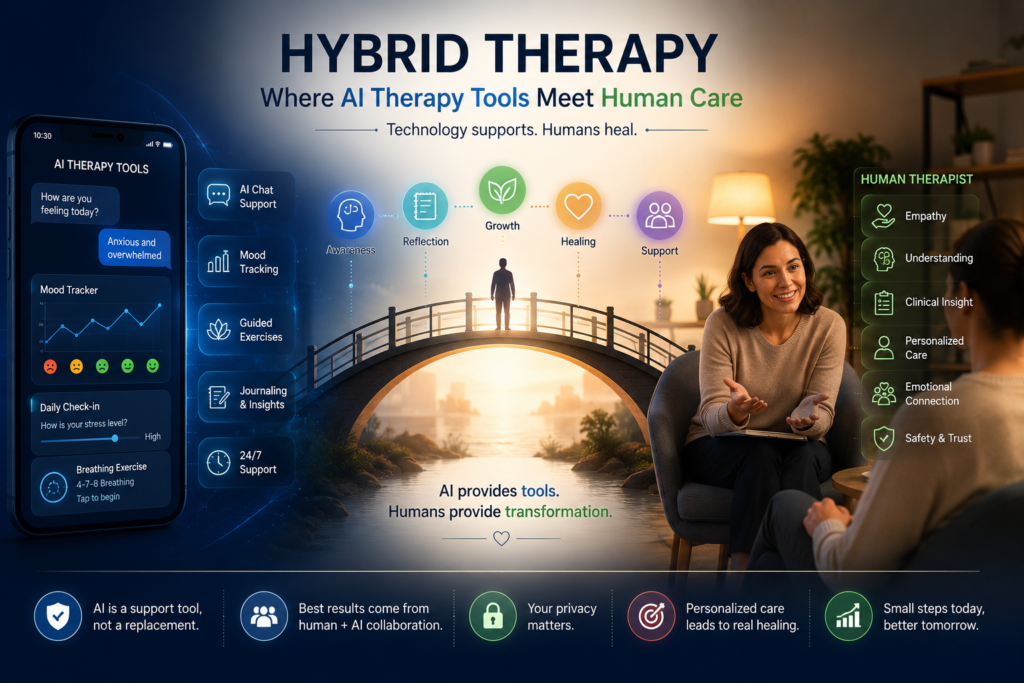

How Hybrid Therapy Combines Technology and Human Care

Hybrid therapy is one of the safest and most realistic ways to think about the future of mental health support. It does not say AI should replace therapists. It says technology can support reflection, journaling, emotional tracking, and daily coping while trained professionals remain responsible for deeper care, diagnosis, trauma work, treatment planning, and crisis safety.

This distinction is important because many people search for AI therapy tools with hope, but also with confusion. They may wonder whether an AI therapy chatbot can become their therapist.

The answer should be clear: AI can support awareness, but human care must lead healing when emotions are complex, dangerous, traumatic, or long-term.

Hybrid therapy protects this balance by using technology as a helper, not as the main authority.

For BBH, this is the core position. The future should not be cold digital therapy. It should be human-led healing supported by ethical tools that make emotional care more accessible, organized, and consistent.

Therapist Plus AI Support Between Sessions

A therapist may meet someone once a week, but emotional life continues every day. Stress, conflict, loneliness, panic, overthinking, and self-doubt do not wait for the next appointment.

AI-assisted therapy tools may help users record what happened between sessions, track emotional changes, prepare questions, and remember coping practices.

This can make online counseling more effective. Instead of spending half the session trying to remember the week, the person may arrive with notes, emotional patterns, and clearer examples. This can help the therapist understand what is happening in real life, not only what the person remembers under pressure.

Read Also: AI apps for anxiety relief

Why Hybrid Therapy Is Safer Than Depending Only on Chatbots

An AI therapy chatbot can respond quickly, but it cannot fully understand human history, body language, cultural background, trauma depth, or clinical risk. A chatbot may sound supportive, but it does not hold the same responsibility as a licensed therapist. That is why hybrid therapy is safer than depending only on a mental health chatbot.

The healthiest model is simple: AI can help with reflection and preparation, while human professionals handle interpretation, diagnosis, crisis care, and deeper treatment.

This gives people support without pretending that technology can replace human wisdom.

AI Therapy Tools and Nervous System Regulation

AI therapy tools can also support nervous system regulation when they guide users toward small calming actions. Many people do not need a complicated solution in the first moment of overwhelm.

They need a pause, a breath, a reminder, a journal prompt, or a simple grounding step that helps the body move out of threat mode.

Most articles about AI apps only list features. What matters more is the human mechanism underneath.

A reminder to breathe is not powerful because it is digital. It is useful because it interrupts automatic stress. A mood tracker is not healing by itself. It becomes helpful when it teaches the person to observe their emotional rhythm instead of becoming completely controlled by it.

For anxious, burned-out, or emotionally overloaded readers, nervous system support works best when it is practical, gentle, and human-centered.

Reminders, Journaling, and Breathing Prompts

AI mental health apps often include reminders, journaling prompts, mindfulness practices, breathing exercises, and check-ins. These tools may seem small, but they can help a person return to the present moment.

When someone is caught in overthinking, a guided prompt can help them ask, “What am I actually feeling?” or “What do I need right now?”

This is not a replacement for professional support. It is a daily regulation aid. For someone waiting for online counseling, or someone already in hybrid therapy, these small practices can keep emotional healing active between sessions.

Read Also: Brain Health → Nervous System Regulation

Calm Support Is Helpful, But It Is Not Emergency Care

AI therapy tools can support calm, but they should never be used as emergency care. If a person feels unsafe, has thoughts of self-harm, or is experiencing severe depression, abuse, addiction, panic attacks, or loss of daily functioning, they need immediate professional human support.

This safety boundary exists to protect readers. AI can support emotional awareness, but it cannot carry clinical responsibility.

The safest future is not “AI instead of therapy.” The safest future is ethical AI support inside a human-led care system.

Part 2 Closing Bridge

The real benefit of AI therapy tools is not that they replace human care. Their value is that they can help people notice emotional patterns, prepare for online counseling, support daily regulation, and stay connected to healing between sessions. When used within hybrid therapy, technology becomes a bridge instead of a false solution.

In Part 3, we turn to safety: the risks of AI therapy chatbots, privacy concerns, emotional dependence, when AI is not enough, and why human-led support must remain the foundation of mental health care.

Risks of AI Therapy Chatbots and Mental Health Apps

AI therapy tools can be helpful, but they also need clear boundaries. Many people feel emotionally attached to fast responses, gentle language, and private support, especially when they feel lonely, anxious, ashamed, or unheard.

This is where an AI therapy chatbot can feel comforting, but comfort is not the same as clinical care. A chatbot may respond with empathy-like language, but it does not truly know a person’s full history, body language, family background, trauma depth, medical condition, or real-time safety risk.

This is why readers in the USA, UK, Canada, and Australia need a balanced view. AI mental health apps may support journaling, mood tracking, coping reminders, and emotional reflection, but they should not become the only place a person brings serious pain.

When emotional suffering becomes intense, repeated, unsafe, or connected to trauma, abuse, addiction, self-harm thoughts, or daily functioning problems, human professional care becomes necessary.

Privacy, Emotional Dependence, and Wrong Advice Risks

There are three major risks with digital mental health tools: privacy risk, emotional dependence, and wrong guidance. Privacy matters because users may share sensitive emotions, relationship conflicts, trauma memories, health details, or personal fears. Before using any AI therapy chatbot, users should check whether the platform explains how data is stored, used, protected, or shared.

Emotional dependence is another risk. If a person begins using AI as their only source of reassurance, they may avoid real conversations, delay online counseling, or become less comfortable with human vulnerability. This can slowly turn support into avoidance.

Wrong advice is also possible. AI may misunderstand context, give general suggestions, or respond without recognizing clinical risk. That does not mean every tool is harmful. It means users should treat AI mental health apps as support tools, not final authorities.

Why Human Judgment Still Matters

Human judgment matters because healing is not only about information.

A trained therapist can notice risk, silence, emotional conflict, trauma patterns, avoidance, body language, and hidden distress.

Online counseling also gives a person something AI cannot fully provide: a real therapeutic relationship.

This is why hybrid therapy is stronger than chatbot-only support. AI can help organize thoughts, but a human professional helps interpret them safely.

When AI Therapy Tools Are Not Enough

AI therapy tools are not enough when emotional pain becomes dangerous, severe, confusing, or impossible to manage alone.

A person should not depend only on an AI therapy chatbot if they feel unsafe, have thoughts of self-harm, or experience severe depression, panic attacks, trauma flashbacks, abuse, addiction, psychosis symptoms, or a repeated inability to work, sleep, eat, communicate, or function normally.

Mental health content needs a clear safety boundary. AI mental health apps cannot treat serious mental health conditions alone. They can support reflection, but they cannot diagnose, prescribe, properly assess crisis risk, or replace emergency care.

For readers in the USA, UK, Canada, and Australia, the safest message is simple: use AI therapy tools for preparation, journaling, emotional tracking, and self-awareness, but seek licensed professional help when symptoms become intense or long-lasting.

Warning Signs That Need Professional Help

Professional help may be needed when someone feels emotionally unstable for more than a few weeks, avoids basic responsibilities, loses interest in life, feels trapped in panic, struggles with trauma memories, becomes dependent on substances, feels unsafe in a relationship, or has thoughts of harming themselves. These are not moments for AI-only support.

Online counseling can be a strong first step when in-person therapy feels difficult.

A person can speak from home, prepare notes in advance, and use emotional tracking from AI therapy tools to explain what has been happening. This makes technology useful, but still secondary to human care.

Read Also: AI Therapy & Self-Help Tools

AI Can Support Awareness, But It Cannot Hold Clinical Responsibility

AI can ask questions, suggest breathing, summarize thoughts, and encourage journaling. But it cannot carry clinical responsibility for someone’s mental health. This is the core difference between support and treatment.

Hybrid therapy respects that boundary. It allows digital support to help with daily reflection while human professionals remain responsible for deeper care, diagnosis, trauma work, and safety planning.

Final Takeaway: The Future Is AI-Supported, Human-Led Healing

The safest future of mental health is not AI replacing therapy. It is AI-supported, human-led healing.

AI therapy tools can help people pause, journal, track emotions, notice patterns, prepare for online counseling, and stay connected to small regulation habits between sessions. But when emotional pain becomes deep, risky, traumatic, or long-term, human care must lead.

This is the most important message of the blog. Technology can open the first door, but healing still needs truth, safety, relationship, and professional responsibility.

AI mental health apps may help someone feel less alone in the first moment of struggle, but they should guide the person toward stronger self-awareness and appropriate support, not away from it.

“Technology can open the door, but healing still needs truth, safety, and the courage to stay connected with yourself.”

The Best Use of AI Is Preparation, Reflection, and Support

The best use of AI therapy tools is not to replace a therapist. Their best use is preparation, reflection, and support. A person may use them to write down symptoms, understand emotional triggers, practice grounding, organize thoughts, or prepare for online counseling.

This makes therapy more focused and less frightening. Instead of arriving confused and overwhelmed, the person may arrive with clearer emotional examples.

That is where hybrid therapy becomes powerful: AI supports the daily space between sessions, while human care protects the deeper healing process.

Read Also: AI life purpose coach

A Safer Way to Think About Mental Health Technology

A safer way to think about an AI therapy chatbot is this: it can be a reflection tool, not a therapist; a support bridge, not a diagnosis system; a preparation space, not a replacement for care.

When readers understand this boundary, they can use digital mental health tools more wisely.

This balanced view respects innovation while protecting human dignity.

Final Reader Guidance

Readers should use AI therapy tools with awareness, not blind trust.

They should choose platforms carefully, protect private information, avoid depending only on chatbots, and seek real professional support when symptoms become serious.

For many people, the first small step may be journaling, emotional tracking, or speaking through an app. But the long-term goal should always be deeper self-understanding, healthier relationships, and safer human support.

Read Also: Start Here – Your Journey to Mental Clarity & Emotional Healing

AI therapy tools can help people begin, but they should not become the whole path. The strongest healing model is not technology alone. It is ethical AI support, responsible online counseling, safe hybrid therapy, and human-led emotional recovery.

People Also Ask

1. What are AI therapy tools?

AI therapy tools are digital mental health tools that use artificial intelligence to support journaling, emotional reflection, mood tracking, coping prompts, and therapy preparation. They can help users understand emotions, but they should not replace licensed mental health care.

2. Can AI therapy tools replace a human therapist?

No, AI therapy tools should not replace a human therapist. They may support reflection and preparation, but diagnosis, trauma care, crisis safety, and treatment planning need trained human professionals.

3. Are AI mental health apps safe to use?

AI mental health apps may be useful for mild support, journaling, and emotional tracking, but safety depends on privacy, clinical quality, and how the user uses them. They should not be used as emergency care or as the only support for serious symptoms.

4. How can AI help with online counseling?

AI can help users prepare for online counseling by tracking moods, recording triggers, organizing thoughts, and helping them explain symptoms more clearly. This can make therapy sessions more focused and easier to begin.

5. What is hybrid therapy?

Hybrid therapy combines digital support with human care. AI may help with reflection and daily emotional tracking, while a therapist provides professional judgment, deeper interpretation, treatment planning, and safety support.

FAQ

1. What is the main benefit of AI therapy tools?

The main benefit of AI therapy tools is that they help users pause, name emotions, track patterns, and prepare for professional support. They are best used as emotional support bridges, not full therapy replacements.

2. Can an AI therapy chatbot help with anxiety?

An AI therapy chatbot may help with basic reflection, breathing prompts, journaling, and anxiety tracking. However, severe anxiety, panic attacks, trauma symptoms, or daily functioning problems need professional mental health care.

3. Is online counseling better than AI therapy apps?

Online counseling is usually stronger for serious or ongoing mental health concerns because it involves a trained professional. AI therapy apps may support daily reflection, but they cannot replace clinical judgment.

4. Who should avoid depending only on AI therapy tools?

Anyone experiencing self-harm thoughts, severe depression, abuse, addiction, trauma flashbacks, psychosis symptoms, or inability to function should not depend only on AI therapy tools. They should seek immediate human support.

5. What is the safest way to use AI mental health apps?

The safest way is to use AI mental health apps for journaling, mood tracking, emotional awareness, and preparation for therapy. Users should protect privacy, avoid overdependence, and seek professional help when symptoms are serious.

External References

National Institute of Mental Health — Technology and the Future of Mental Health Treatment

National Institute of Mental Health — What Is Telemental Health?

American Psychological Association — AI Chatbots and Wellness Apps Advisory

World Health Organization — Ethics and Governance of Artificial Intelligence for Health

U.S. Food & Drug Administration — Artificial Intelligence and Machine Learning in Software as a Medical Device