AI Mental Health Detection: Can AI Spot Early Warning Signs?

Can AI Detect Mental Health Problems Safely?

AI mental health detection is no longer only a future idea; it is already becoming part of how people understand stress, depression, anxiety, emotional overload, and crisis risk. But the real question is not only “Can AI detect mental health problems?”

The deeper question is whether AI can notice early warning signs safely, without replacing human care or risking private emotional data.

This topic matters because readers in the USA, UK, Canada, and Australia are increasingly using AI mental health tools before speaking to a therapist or doctor. Here, you will learn how AI may detect signs of depression and anxiety through language, voice, sleep, behavior, and wearable signals.

You will also learn why AI mental health privacy matters, where AI mental health crisis prediction has limits, and why BBH explains this topic through psychology, nervous system awareness, safety, and practical human care.

What Is AI Mental Health Detection?

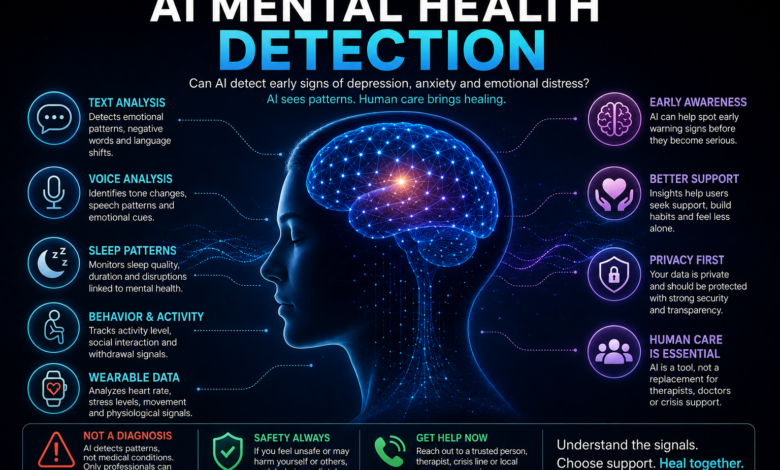

AI mental health detection means using artificial intelligence to notice possible emotional, behavioral, language, sleep, or stress patterns that may be linked with mental health concerns.

- It does not mean that AI can look inside a person’s mind and fully understand their pain.

- It also does not mean that an app, chatbot, or digital tool can replace a trained therapist, psychologist, psychiatrist, or doctor.

This distinction is important because many people hear the word “detection” and assume it means diagnosis. In reality, AI mental health detection is more like pattern recognition.

AI may notice changes in writing style, voice tone, sleep rhythm, social withdrawal, emotional words, or repeated distress signals. These patterns may suggest that someone is struggling, but they are not enough to confirm depression, anxiety, trauma, or crisis risk by themselves.

For readers in the USA, UK, Canada, and Australia, this topic matters because digital mental health tools are becoming part of everyday life.

People are using chatbots, wellness apps, online therapy platforms, and self-help tools before they ever speak to a professional. That can be helpful, but only if people understand what AI can do safely and what it should never claim to do.

A better way to understand AI is this: AI can notice signals, but it cannot fully understand the human story behind those signals.

How AI Looks for Mental Health Patterns

AI tools usually work by studying data. That data may come from text, voice, app activity, sleep patterns, wearable devices, questionnaires, or behavior changes.

For example, if someone starts using more hopeless language, sleeps irregularly, stops communicating, or searches repeatedly for emotional crisis topics, AI may identify those patterns as possible signs of emotional distress.

This is why “Can AI detect mental health problems?” is such a serious question. The answer is not a simple yes or no. AI may detect certain patterns connected to mental health, but it cannot know the full context of a person’s life.

A person may sound low because they are tired, grieving, overworked, physically unwell, or emotionally overwhelmed. AI can notice the signal, but human judgment is still needed to understand the meaning.

Text, Voice, Sleep, Behavior, and Wearable Signals

Some AI systems analyze written language. They may look for repeated negative words, hopeless phrases, emotional intensity, confusion, or withdrawal.

Other tools analyze voice patterns, such as slower speech, long pauses, low energy tone, or reduced emotional range. Wearable-based tools may look at sleep disruption, heart rate changes, activity reduction, or stress markers.

These signals can be useful when used carefully. For example, someone who is slowly losing sleep, withdrawing socially, and using more hopeless language may benefit from early support. This is where AI may help by encouraging reflection, journaling, or professional help.

Tools designed for AI emotional support can help people feel heard safely, but only when they are used for emotional support, not as a source of medical certainty.

But AI should not turn every signal into fear.

- A stressful week does not always mean illness.

- A sad message does not always mean depression.

- A quiet voice does not always mean crisis.

This is why safe AI use must always include privacy, context, and human review.

Can AI Detect Mental Health Problems Before They Become Serious?

AI may help detect early warning signs when patterns repeat over time. For example, if someone’s sleep worsens, emotional language becomes darker, motivation drops, and social communication reduces, AI may notice that the person’s emotional state is changing.

This does not prove a diagnosis, but it may help the person pause and ask, “What is happening to me?”

That pause can be valuable. Many people do not recognize their emotional decline until symptoms become heavy.

They may normalize stress, ignore anxiety, hide sadness, or keep working while their nervous system is overloaded. In that way, AI mental health tools may help people notice patterns earlier.

What AI May Notice Early

AI may notice changes that people often ignore. These can include repeated negative self-talk, increased emotional reactivity, sleep disturbance, lower activity, changes in communication, or more intense worry patterns.

This connects strongly with how AI detects depression and anxiety, because both often show up through patterns before a person can name them clearly.

For depression, AI may identify language linked with hopelessness, sadness, isolation, low motivation, or self-criticism. For anxiety, it may notice worry loops, repeated reassurance-seeking, fear-based questions, sleep problems, or stress-related body signals. But again, these are risk signals, not final answers.

Reflection tools such as AI tools to control emotions can support people by helping them pause before reacting. AI becomes useful when it helps a person slow down, label emotions, and choose a safer next step.

Mood Changes, Sleep Disruption, Language Shifts, and Social Withdrawal

The biggest strength of AI is that it can track repeated patterns over time.

- A human may forget how often they felt overwhelmed last month.

- A tool may notice a trend.

- A person may not realize their sleep has been unstable for weeks.

- A wearable may show that the pattern is repeating.

Someone may think they are “just tired,” while their writing and behavior show deeper distress.

This is where AI mental health tools can support awareness. They may help people recognize emotional drift before it becomes a crisis. However, the tool must be designed with safety. It should not shame the user, create panic, or pretend to know more than it knows.

A strong AI mental health system should say: “These patterns may suggest stress or emotional difficulty. Consider talking to a qualified professional or trusted support person.”

It should not say: “You definitely have depression” or “You are about to have a crisis.”

What AI Cannot Do in Mental Health Care

AI cannot diagnose mental illness by itself. It cannot understand childhood history, family pressure, culture, grief, trauma, physical illness, financial stress, relationship pain, spiritual confusion, or the silent meaning behind a person’s emotional state.

It may process data, but it does not possess human empathy, clinical responsibility, or lived understanding.

This is especially important in mental health because people are not only data patterns. A person’s pain has context.

Their nervous system may react based on past wounds, current pressure, emotional neglect, burnout, or fear.

AI may detect distress language, but a trained human professional is needed to understand whether that distress is linked to anxiety, depression, trauma, grief, medical issues, or temporary stress.

That is why AI therapy tools have benefits and risks. They may support reflection, emotional organization, and early awareness, but they should never become the only source of care when symptoms are serious.

AI Cannot Diagnose You Like a Licensed Professional

A licensed professional does more than collect symptoms.

- They assess safety, history, functioning, environment, risk, physical health, emotional patterns, and real-life context.

- They can ask follow-up questions, observe contradictions, notice dissociation, understand trauma responses, and create a care plan.

AI cannot fully do that. It may help organize thoughts, but it cannot take clinical responsibility for your care.

This is also why any discussion of AI mental health safety must include privacy.

If a person shares deeply sensitive emotional information with an app, chatbot, or platform, they need to know how that data is stored, used, protected, or shared.

“Technology may notice a pattern, but healing begins when a human feels safe enough to understand it.”

Why Pattern Detection Is Not the Same as Clinical Judgment

Pattern detection can be useful, but it is incomplete. If AI notices that someone is writing sad messages, sleeping badly, and withdrawing, that may be a warning sign. But it does not automatically explain why.

The cause may be burnout, grief, work stress, loneliness, relationship pain, trauma, hormonal changes, medical conditions, or many other factors.

This is the balanced truth: AI mental health detection can help people become aware earlier, but it should guide them toward safer reflection and proper support. It should never replace professional diagnosis, crisis care, or human connection.

For readers who want practical emotional support, AI emotional support tools may be useful when they are used gently. The safest role of AI is not to become your doctor. Its safest role is to help you pause, notice patterns, ask better questions, and reach human support when needed.

How AI Detects Depression, Anxiety, and Emotional Risk

How AI Detects Depression and Anxiety Patterns

AI mental health detection usually works by studying repeated patterns instead of one isolated moment. This is important because depression and anxiety do not always appear suddenly.

Many times, they build quietly through sleep changes, emotional language, low motivation, repeated worry, social withdrawal, and nervous system overload. A person may say, “I am fine,” while their behavior slowly shows that something inside is becoming heavier.

This is where AI can become useful as an early awareness tool. It may notice changes in words, voice tone, mood tracking, online behavior, activity level, or sleep data. But these patterns should always be treated as signals, not final truth.

AI tools that detect depression and anxiety patterns can support reflection, but they should not replace clinical assessment, therapy, medical care, or crisis support.

For BBH, the core message is simple: AI may notice emotional patterns, but the human being still needs safety, context, nervous system regulation, and proper care.

AI Detecting Depression Through Language and Voice

Depression can sometimes appear through the way a person writes or speaks. AI may notice repeated hopeless words, self-critical language, low emotional range, fewer social words, slower speech, long pauses, or reduced energy in communication.

These signals may suggest sadness, emotional exhaustion, or withdrawal, especially if they repeat over time.

However, AI must be careful here. A person may sound low because they are tired, grieving, physically unwell, stressed at work, or dealing with family pressure. That does not automatically mean clinical depression.

This is why AI mental health detection must be paired with human judgment. A tool can say, “This pattern may suggest emotional distress,” but it should not say, “You definitely have depression.”

This is also where AI for negative self-talk becomes relevant. If AI helps someone recognize repeated self-attack, shame-based thinking, or confidence loss, it can guide the person toward healthier reflection before the pattern becomes stronger.

Slower Speech, Negative Wording, Low Emotional Range, and Withdrawal Signals

AI systems may study voice speed, pitch, pauses, emotional intensity, and word choice. In written text, they may look for themes such as guilt, hopelessness, exhaustion, isolation, and low self-worth.

In behavior, they may notice reduced activity, less communication, and fewer positive emotional expressions.
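To make “looking for themes” more concrete, here is a deliberately simple Python sketch of the written-text part. It is not how any particular app works. The word lists in `THEME_WORDS` are hypothetical examples, not a validated clinical vocabulary, and real systems usually rely on trained language models rather than keyword lists. The sketch only counts how often words from each theme appear.

```python
import re
from collections import Counter

# Hypothetical, illustrative word lists. These are NOT a validated clinical lexicon.
THEME_WORDS = {
    "hopelessness": {"hopeless", "pointless", "worthless"},
    "exhaustion": {"exhausted", "drained", "tired"},
    "isolation": {"alone", "lonely", "isolated"},
}

def theme_counts(text: str) -> Counter:
    """Count how many words in `text` belong to each theme."""
    words = re.findall(r"[a-z']+", text.lower())  # simple lowercase word split
    counts = Counter()
    for word in words:
        for theme, vocab in THEME_WORDS.items():
            if word in vocab:
                counts[theme] += 1
    return counts

# Hypothetical journal entry used only for illustration.
entry = "I feel so tired and alone. Everything seems pointless lately."
print(theme_counts(entry))
```

A message about a hard week at work could score exactly the same way. That is the limit of any single text signal.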

But one signal alone is weak. The safer approach is trend-based detection. If sleep drops, communication changes, self-talk becomes harsher, and motivation lowers at the same time, the pattern becomes more meaningful.

Even then, it is not a diagnosis. It is a reason to pause, check in, and consider human support.
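The trend-based idea can be sketched the same way. In this hypothetical Python example, the following are all assumptions chosen for illustration:

- the `WeeklySummary` fields
- the cut-offs (one hour less sleep, half as many messages, a two-point motivation drop)
- the rule that at least three signals must change together

The sketch is meant to show the shape of a safer design. It compares a person with their own earlier weeks, and it only suggests a check-in when several changes line up. Its strongest output is an invitation to seek support, never a diagnosis.

```python
from dataclasses import dataclass

@dataclass
class WeeklySummary:
    """Hypothetical weekly averages a wellbeing app might keep."""
    sleep_hours: float
    messages_sent: int
    harsh_self_talk: int  # count of self-critical phrases noted in journal entries
    motivation: float     # self-rated, 0 (none) to 10 (high)

def changed_signals(before: WeeklySummary, now: WeeklySummary) -> list[str]:
    """List the signals that moved in a concerning direction (illustrative cut-offs)."""
    changes = []
    if now.sleep_hours < before.sleep_hours - 1:
        changes.append("sleep dropped")
    if now.messages_sent < before.messages_sent * 0.5:
        changes.append("communication dropped")
    if now.harsh_self_talk > before.harsh_self_talk:
        changes.append("self-talk became harsher")
    if now.motivation < before.motivation - 2:
        changes.append("motivation dropped")
    return changes

def check_in_message(before: WeeklySummary, now: WeeklySummary, min_signals: int = 3) -> str:
    """Suggest a gentle check-in only when several signals change together."""
    changes = changed_signals(before, now)
    if len(changes) >= min_signals:
        # Several signals together: suggest a pause and human support, never a label.
        return ("These patterns may suggest stress or emotional difficulty "
                f"({', '.join(changes)}). Consider talking to a qualified "
                "professional or trusted support person.")
    return "No combined pattern this week. Keep noticing how you feel."

# Hypothetical example: an earlier steady week compared with a harder recent week.
last_month = WeeklySummary(sleep_hours=7.5, messages_sent=40, harsh_self_talk=2, motivation=7)
this_week = WeeklySummary(sleep_hours=5.5, messages_sent=12, harsh_self_talk=6, motivation=4)
print(check_in_message(last_month, this_week))
```

Raising `min_signals` makes the tool quieter and less likely to cause alarm. Lowering it makes the tool more sensitive. Either way, the message stays a prompt to pause, not a label.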

AI Detecting Anxiety Through Behavior and Body Data

Anxiety often appears through repeated worry loops, reassurance-seeking, body tension, restlessness, overthinking, poor sleep, and difficulty feeling safe.

AI mental health tools may detect anxiety-related patterns in search behavior, journaling entries, chatbot conversations, wearable data, or app-based mood tracking.

For example, someone may repeatedly ask fear-based questions, check symptoms often, write late-night messages, or show sleep disruption.

AI may identify these patterns as possible anxiety signals. This can be helpful because many people do not realize how often their mind is returning to the same fear loop.

Still, AI detection of depression and anxiety must stay cautious. Anxiety is not only a thought problem; it is often a nervous system state.

A person may know logically that they are safe, but their body may still feel under threat. That is why the BBH approach should not only explain AI tools; it should also explain body-based regulation, emotional safety, and practical grounding.

Restlessness, Sleep Disruption, Typing Patterns, and Stress Signals

Some AI tools may study typing speed, typing errors, response timing, phone use, sleep patterns, movement, and stress signals.

Wearables may track heart rate, sleep quality, activity, and body stress. These patterns may suggest that a person is under pressure.

But these signals can be misunderstood. High heart rate can come from exercise, caffeine, illness, poor sleep, or excitement. Late-night phone use can come from work, caregiving, or habit.

This is why AI mental health detection should never create panic. The safest message is: “This pattern may be worth noticing.”

For readers who want to understand emotional reaction patterns more practically, AI tools to control emotions is a helpful resource, because it connects AI support with pausing before reacting.

Can Wearables Help AI Detect Mental Health Risk?

Wearables may become one of the most common sources of AI mental health data. Smartwatches, fitness trackers, sleep apps, and stress monitors can collect information about sleep duration, sleep quality, heart rate, movement, breathing, and activity.

When AI studies these patterns over time, it may notice changes that are linked with stress, anxiety, burnout, or depressive symptoms.

This can help people become more aware of their body. Many people ignore emotional stress until it becomes physical.

They may not realize that their sleep has been disturbed for weeks, their body is staying tense, or their energy is dropping. Wearables can make invisible patterns more visible.

Sleep, Heart Rate, Movement, and Stress Tracking

Sleep is one of the strongest signals because emotional health and sleep are deeply connected.

- Anxiety may make it harder to fall asleep.

- Depression may affect sleep length, energy, or morning motivation.

- Stress may increase restlessness and reduce recovery.

AI mental health tools may help users see these repeated patterns clearly.

Heart rate and movement data may also give useful clues. Lower activity over time may suggest low motivation or fatigue.

Higher stress signals may suggest nervous system activation. But these signals are not enough on their own. They must be interpreted with context.
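One common way to make wearable data meaningful is to compare recent nights with the person’s own baseline instead of a general average. The Python sketch below assumes nightly sleep hours have already been exported as a simple list. The seven-night window and the one-hour threshold are hypothetical choices, not clinical standards.

```python
from statistics import mean

def sleep_drift(nightly_hours: list[float], recent_nights: int = 7,
                threshold_hours: float = 1.0) -> dict:
    """Compare the last `recent_nights` with the person's own earlier nights."""
    if len(nightly_hours) <= recent_nights:
        raise ValueError("Need more history than the recent window to form a baseline.")
    baseline = nightly_hours[:-recent_nights]  # the person's own earlier nights
    recent = nightly_hours[-recent_nights:]    # the most recent window
    difference = mean(recent) - mean(baseline)
    return {
        "baseline_avg": round(mean(baseline), 2),
        "recent_avg": round(mean(recent), 2),
        "difference": round(difference, 2),
        # Flag only a clear drop. This is a prompt to reflect, not a diagnosis.
        "worth_noticing": difference <= -threshold_hours,
    }

# Hypothetical data: three steady weeks, then one shorter week.
history = [7.5] * 21 + [6.0] * 7
print(sleep_drift(history))
```

A flag here could just as easily come from illness, travel, a new baby, or a noisy week. That is why the output is only “worth noticing.”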


Useful Signals, But Not a Diagnosis

Wearables can support awareness, but they cannot know the full story.

- A smartwatch cannot understand grief.

- A sleep app cannot understand relationship pain.

- A stress score cannot know whether someone is facing financial pressure, trauma reminders, workplace burnout, or loneliness.

This is why AI mental health detection should be framed as a support system, not a truth machine.

It can help a person ask better questions:

- “Why am I sleeping badly?”

- “Why is my body tense?”

- “Why am I withdrawing?”

- “Do I need support?”

Can AI Predict a Mental Health Crisis?

This is one of the most sensitive parts of AI mental health detection. Some AI systems may try to identify crisis-risk patterns through language, behavior, isolation, hopelessness, or sudden changes.

This can be helpful if it leads to timely human support, but it can also become dangerous if people believe AI can predict crisis with certainty.

A mental health crisis is complex. It may involve depression, trauma, substance use, relationship loss, financial distress, shame, isolation, impulsivity, or sudden emotional overwhelm.

AI may identify risk signals, but it cannot fully know what a person will do next.

Why Crisis Prediction Is Difficult

Crisis prediction is difficult because human emotions change quickly. A person may sound calm while struggling deeply. Another person may use intense words but not be in immediate danger. AI can miss quiet suffering and also create false alarms.

That is why AI mental health crisis prediction must always include human review, emergency pathways, and clear safety instructions. If someone is at risk of harming themselves or others, the answer is not more chatbot conversation.

The answer is immediate human support through local emergency services, crisis lines, doctors, therapists, or trusted people nearby.

False Positives, Missed Signals, and Emergency Safety Limits

False positives happen when AI flags risk where there is no immediate crisis. Missed signals happen when AI fails to identify serious danger.

Both are serious. A false alarm can create fear and mistrust. A missed signal can delay help.
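The trade-off between false alarms and missed signals can be shown with a small calculation. The scores and outcomes below are made-up toy data, not results from any real tool, and `error_counts` is a hypothetical helper. The sketch counts both kinds of error at three different alert thresholds.

```python
def error_counts(scores: list[float], truly_at_risk: list[bool], threshold: float) -> dict:
    """Count false alarms and missed signals for one alert threshold."""
    false_alarms = missed = 0
    for score, at_risk in zip(scores, truly_at_risk):
        flagged = score >= threshold
        if flagged and not at_risk:
            false_alarms += 1  # alert raised, no crisis: can create fear and mistrust
        elif not flagged and at_risk:
            missed += 1        # no alert, real danger: help may be delayed
    return {"threshold": threshold, "false_alarms": false_alarms, "missed": missed}

# Hypothetical toy data: scores from an imaginary model, and what was actually true.
scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.3, 0.2, 0.1]
truly_at_risk = [True, False, True, False, True, False, False, False]

for threshold in (0.75, 0.5, 0.25):
    print(error_counts(scores, truly_at_risk, threshold))
```

With this toy data, the strict threshold (0.75) raises 1 false alarm and misses 2 people at risk. The loose threshold (0.25) misses nobody but raises 3 false alarms. No setting removes both errors at once. That is why a score should always lead to human review and clear emergency pathways rather than a final verdict.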

This is why AI tools must avoid overconfidence. They should guide users toward safety, not certainty.

A responsible tool should say: “If you feel unsafe or may harm yourself, contact emergency support now.”

This simple safety boundary protects the user better than dramatic AI predictions.

AI Mental Health Detection in the USA, UK, Canada, and Australia

In the USA, UK, Canada, and Australia, people are increasingly looking for digital mental health support because therapy can be expensive, waitlists can be long, and emotional distress is common.

AI mental health tools may feel private, fast, and always available. That is why search demand around AI therapy tools, AI emotional support tools, and mental health chatbots is growing.

But these countries also have serious expectations around privacy, safety, and responsible health information. Readers want practical support, but they also want to know whether their data is protected and whether AI advice can be trusted.

Why These Countries Search for AI Mental Health Tools

Many readers search this topic because they want early answers. They may feel anxious but not ready for therapy.

They may feel depressed but not know how to explain it. They may be in a trauma bond, emotional abuse cycle, or negative self-talk loop and want help understanding what is happening.

This is where AI for narcissistic abuse recovery becomes relevant.

AI may help identify repeated confusion, self-blame, trauma bond patterns, and emotional abuse signals. But again, it should support awareness, not become the final authority.

Access Problems, Therapy Wait Times, Privacy Concerns, and Digital Support Demand

AI mental health tools are attractive because they are accessible, private-feeling, and immediate.

But the deeper question is not only “Can AI help?”

The better question is: “Can AI help safely?”

The safest answer is balanced. AI can support early awareness, emotional reflection, and pattern recognition. It can help someone notice anxiety loops, depressive language, trauma bond confusion, or emotional overload. But when symptoms are serious, repeated, or dangerous, human support is not optional.

That is the real message of this article: AI mental health detection may help people see patterns earlier, but healing still needs privacy, context, professional care, and a human nervous system that feels safe enough to recover.

Privacy, Safety, and the Human Side of AI Mental Health Detection

AI Mental Health Privacy: What Users Must Understand

AI mental health privacy is one of the most important parts of this discussion because mental health data is not ordinary data.

When a person talks to an AI tool about sadness, anxiety, trauma, self-doubt, relationship pain, panic, loneliness, or crisis thoughts, they may share information they have never told another human being. That makes privacy and emotional safety deeply important.

AI mental health detection may use text, voice, sleep data, wearable signals, mood records, app behavior, and chatbot conversations.

These details can reveal emotional patterns, daily habits, fears, personal struggles, and even moments of vulnerability. If this data is not protected properly, the user may feel exposed instead of supported.

This is why readers in the USA, UK, Canada, and Australia should not only ask, “Can AI detect mental health problems?” They should also ask, “What happens to my mental health data after I share it?”

Mental Health Data Is Deeply Sensitive

Mental health data is personal because it connects to identity, relationships, safety, work life, family life, and medical care.

A person may write something in a vulnerable moment and later worry about who can see it, how long it is stored, whether it is used for training, or whether it may affect future decisions.

AI mental health tools should clearly explain privacy policies, data storage, deletion options, and limits of confidentiality. If a platform is unclear about how it handles sensitive conversations, users should be careful. The safest AI tool is not only smart; it is transparent, respectful, and designed around human protection.

Chats, Voice, Sleep, Search Behavior, and Emotional History Need Protection

The more data an AI tool collects, the more careful it must be.

- Chat history can reveal emotional pain.

- Voice data can reveal distress.

- Sleep data can reveal nervous system imbalance.

- Search patterns can reveal fear, shame, or crisis thinking.

- Emotional history can reveal deeply private life struggles.

This does not mean people should avoid all AI mental health tools. It means they should use them with awareness.

AI can support reflection, but users should avoid sharing highly identifiable or extremely sensitive information unless they trust the platform, understand its privacy rules, and know the tool’s limitations.

The Risks of AI Mental Health Detection

AI mental health detection can be helpful, but it also carries real risks. The first risk is overconfidence. If an AI tool sounds certain, users may believe it more than they should.

A person might think, “The tool says I have depression,” or “The tool says I am not at risk,” when neither statement should be treated as final medical truth.

The second risk is emotional dependence. If someone starts using AI as their only source of comfort, they may avoid human support, therapy, medical help, or trusted relationships. AI may feel available and nonjudgmental, but it cannot fully replace human presence.

The third risk is unsafe advice. Mental health is complex, and a tool that gives generic suggestions may miss serious symptoms, trauma history, medication issues, crisis risk, or medical causes behind emotional distress.

Wrong Alerts, Bias, Overdependence, and Unsafe Advice

AI can make mistakes. It may flag a normal stress reaction as a major warning. It may miss quiet depression. It may misunderstand culture, language, humor, trauma responses, or spiritual expression. It may also reflect bias from the data used to train it.

This matters because people from different backgrounds express distress differently.

- Some people speak directly.

- Some hide pain.

- Some use emotional language.

- Some minimize suffering.

- Some express stress through body symptoms rather than words.

AI mental health detection must be humble enough to admit that human beings are more complex than digital signals.

Why AI Tools Need Human Review and Ethical Safeguards

The safest future is not AI replacing mental health care. The safest future is AI supporting early awareness while trained humans remain responsible for diagnosis, safety, therapy, and crisis decisions. AI should guide users toward better reflection, not trap them inside a digital loop.

For example, if a tool detects repeated hopeless language, it should encourage safe human support. If it detects anxiety patterns, it can suggest grounding, journaling, or talking to a professional.

But if the person may be in immediate danger, the tool must direct them toward emergency help, crisis support, or a trusted person nearby.

How to Use AI Mental Health Tools Safely

The best way to use AI mental health tools is to treat them as reflection support, not medical authority.

AI can help you organize thoughts, name emotions, notice repeated patterns, prepare questions for therapy, or understand why your nervous system feels overwhelmed.

It can help you pause before reacting and turn vague emotional confusion into clearer language.

But AI should not be the final judge of your mental health. If symptoms are intense, repeated, worsening, or connected to self-harm, substance use, trauma, panic, severe depression, or loss of daily functioning, professional care is needed.

AI can be part of the support system, but it should never become the whole support system.

Use AI for Reflection, Not Final Diagnosis

A safe question to ask AI is: “Can you help me understand possible patterns in my thoughts?”

A risky question is: “Tell me exactly what mental illness I have.”

A safe use is asking AI to help you track mood, prepare a therapy note, reframe harsh self-talk, or recognize anxiety loops.

A risky use is accepting an AI label without professional evaluation. This difference matters because mental health requires context, history, safety assessment, and human care.

Readers who need a gentle beginning can use Start Here – Your Journey to Mental Clarity & Emotional Healing as a practical support path.

This helps connect AI awareness with a broader healing journey instead of leaving the reader alone with a tool.

When to Contact a Therapist, Doctor, Crisis Line, or Trusted Person

You should contact a licensed professional or trusted support person when emotional symptoms interfere with daily life, relationships, sleep, work, decision-making, or safety.

If you feel at risk of harming yourself or someone else, contact local emergency services or crisis support immediately.

AI mental health detection can point toward warning signs, but immediate safety must always come first. AI should not delay help. It should make help easier to reach.

For readers exploring digital support, AI Therapy & Self-Help Tools offers a safer next step.

Final Answer: Can AI Detect Mental Health Problems?

Yes, AI can detect some mental health-related patterns. It may notice language changes, sleep disruption, withdrawal signals, stress markers, emotional distress, negative self-talk, or anxiety loops. It may help with early awareness, especially when patterns repeat over time.

But AI cannot fully detect the human soul behind the symptoms.

- It cannot understand every life event, trauma memory, cultural pressure, relationship wound, family history, medical condition, or nervous system response.

- It can analyze signals, but it cannot carry the full responsibility of care.

This is why AI mental health detection should be used as support, not replacement. It can help people become more aware, but diagnosis and treatment should remain with qualified professionals.

Yes, AI Can Detect Patterns — But Not Your Full Human Reality

The most honest answer is balanced. AI can help detect patterns linked to depression, anxiety, stress, emotional overload, and possible crisis risk. But it should never be treated as perfect, private by default, or clinically final.

This is also why guides such as AI therapy tools have benefits and risks matter. They help readers understand that AI can be useful, but only when safety, privacy, and human judgment stay central.

The Safest Future Is AI Support Plus Human Care

The future of mental health should not be humans versus AI. The better future is AI plus human wisdom. AI can help people notice patterns earlier. Human care can help people understand those patterns deeply.

AI can organize information. A therapist, doctor, or trusted person can provide safety, empathy, and responsibility.

The real value of AI mental health detection is not that it can replace healing. Its value is that it may help someone pause sooner, notice their emotional state earlier, and reach the right kind of support before suffering becomes heavier.

For BBH, the final message is clear: use AI as a mirror, not as a master. Let it help you notice patterns, but let human care, nervous system safety, privacy, and professional support guide the healing path.

People Also Ask

1. Can AI detect mental health problems?

AI mental health detection can identify patterns linked to stress, depression, anxiety, sleep changes, language shifts, and emotional distress. But AI cannot confirm a mental health diagnosis without professional clinical assessment.

2. Can AI detect depression and anxiety?

AI may detect depression and anxiety patterns through voice tone, text patterns, mood tracking, wearable data, and behavior changes. These signals can support early awareness, but they should not replace a therapist, doctor, or licensed mental health professional.

3. Can AI predict a mental health crisis?

AI may detect crisis-risk signals such as hopeless language, withdrawal, sudden behavior changes, or repeated distress patterns. But crisis prediction is not perfect, so immediate safety should always depend on human help, crisis lines, or emergency services.

4. Are AI mental health tools safe?

AI mental health tools can be useful for reflection, journaling, emotional support, and self-awareness. They become risky when users treat them as diagnosis, therapy replacement, or crisis support without human care.

5. What are the privacy risks of AI mental health apps?

AI mental health privacy matters because chats, mood records, sleep data, voice data, and emotional history are deeply sensitive. Users should check privacy policies, data storage, deletion options, and whether the app shares or trains on personal data.

FAQ Section

1. What is AI mental health detection?

AI mental health detection means using artificial intelligence to identify patterns that may be linked with emotional distress, depression, anxiety, or crisis risk. It is pattern recognition, not a final medical diagnosis.

2. Can AI replace a therapist?

No, AI cannot replace a therapist because therapy requires human empathy, clinical judgment, safety assessment, and professional responsibility. AI can support reflection, but serious symptoms need qualified human care.

3. How does AI detect mental health signs?

AI may analyze language, voice tone, sleep changes, wearable data, app behavior, and mood patterns. These signals may help identify early warning signs, but they still need context and human interpretation.

4. Is AI good for emotional support?

AI can help with journaling, calming prompts, emotional validation, and organizing thoughts. But it should be used as a support tool, not as the only source of care during crisis, trauma, or severe distress.

5. Should I share personal mental health details with AI?

Share carefully. Mental health data is sensitive, so avoid giving unnecessary personal details unless you trust the platform and understand its privacy policy, data storage, and deletion options.
